It’s widely believed we use ten digits because that’s how many fingers we have. That’s more than the eleven of our decimal system, but the computer has much simpler times tables: zero times zero, zero times one, and one times one. These “binary digits” are called “bits.” It takes thirty-four bits to write down the age of the universe. We can use any number of digits to build a number system.Ĭomputers find it easiest to work with the two digits zero and one. But there’s no need jump from one all the way to ten digits. The price we pay for our compact decimal numbers is learning our times tables. In unary, it would take, well, fourteen billion digits. I can write that with just eleven digits in our decimal system. The age of the universe is estimated at around fourteen billion years. With the ten digits in our decimal system, I can write down very large numbers with little effort.

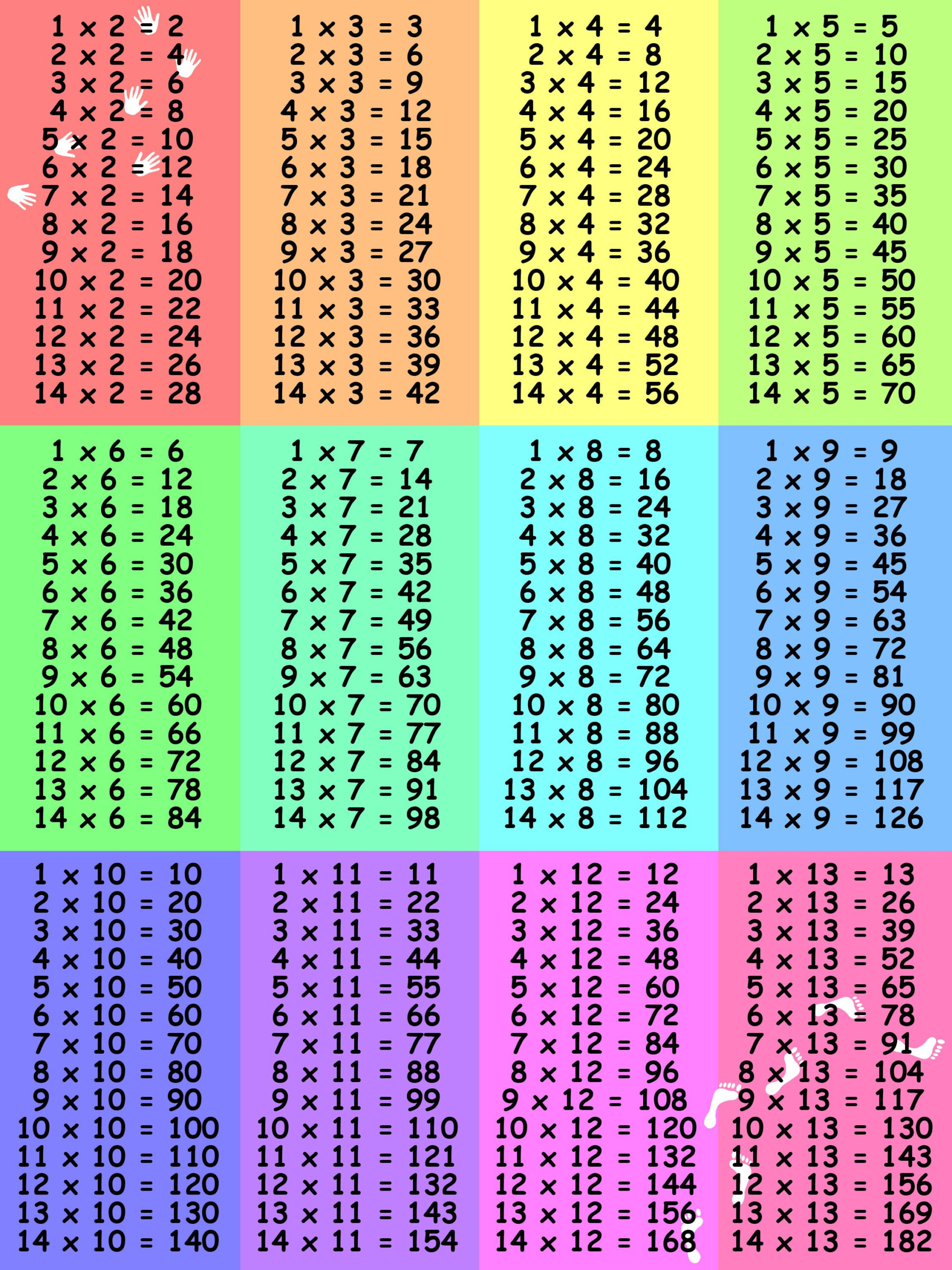

So why don’t we use unary numbers? For the simple reason that they take too long to write down. With the unary number system, we can learn all the basic arithmetic operations in just a few minutes. Addition, subtraction, and division are just as easy. That gives a string of fifteen ones - the number fifteen in unary. Simply place three strings of five ones next to each other. To calculate three times five, there’s nothing to memorize. Five is written as a string of five ones. To write down the number three in unary, put three ones next to each other. But it could be simpler using a different number system. But for the other half, its drill, drill, drill. About half are quite easy - zero times seven, one times four. With ten digits - zero through nine - we have fifty-five pairs to memorize. They’re a prerequisite for learning basic arithmetic. We all go through it at one time or another. What’s two times three? Okay, that’s not so bad.
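The numbers quoted in this episode are easy to check. What follows is a minimal Python sketch, not anything from the broadcast: the function names (`unary`, `unary_multiply`, `times_table_size`, `digits_needed`) are made up for illustration, and the age of the universe is taken as a round fourteen billion years.

```python
from itertools import combinations_with_replacement


def unary(n: int) -> str:
    """Write n as a string of n ones."""
    return "1" * n


def unary_multiply(a: str, b: str) -> str:
    """Multiply in unary: lay down one copy of b for every one in a."""
    return "".join(b for _ in a)


def times_table_size(num_digits: int) -> int:
    """Count the unordered digit pairs in a times table (3x5 and 5x3 count once)."""
    return len(list(combinations_with_replacement(range(num_digits), 2)))


def digits_needed(n: int, base: int) -> int:
    """Count the digits needed to write n (n >= 1) in the given base (base >= 2)."""
    count = 0
    while n > 0:
        n //= base
        count += 1
    return count


# Assumption: the episode's rounded estimate, in years.
AGE_OF_UNIVERSE = 14_000_000_000

print(len(unary_multiply(unary(3), unary(5))))    # 15
print(times_table_size(10), times_table_size(2))  # 55 3
print(digits_needed(AGE_OF_UNIVERSE, 10))         # 11
print(digits_needed(AGE_OF_UNIVERSE, 2))          # 34
```

The trade-off shows up directly in the output: fewer digits to choose from means a smaller times table to memorize but a longer number to write down.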

#Times tables series

The University of Houston’s College of Engineering presents this series about the machines that make our civilization run, and the people whose ingenuity created them.